Project Overview

This cross-medial generative art installation transforms the global pulse of human knowledge into a real-time audiovisual experience. By connecting to the live stream of Wikipedia edits, the system translates abstract metadata—such as byte size, user type, and edit wars—into organic soundscapes and dynamic particle visualizations.

The project treats the Wikipedia API not just as a data source, but as a generative seed. A core research focus lies in the aesthetic distinction between human contributions (mapped to warm, organic subtractive synthesis) and bot activity (represented by precise, cold digital chatter). It explores how invisible digital infrastructure can be made perceptible through sensory translation.

Technical Features

The project was developed in two distinct implementations to explore different technical ecosystems:

1. Public Web Installation (JavaScript / WebGL)

- Browser-Based Real-time Processing: Direct connection to Wikimedia EventStreams via Server-Sent Events (SSE), handled entirely client-side.

- 3D Particle Engine: A high-performance visualization using Three.js (WebGL) to render edits as decaying light particles in a 3D space.

- Web Audio Synthesis: A custom-built subtractive synthesis engine using the Web Audio API to recreate the sonic characteristics of the SuperCollider prototype directly in the browser.

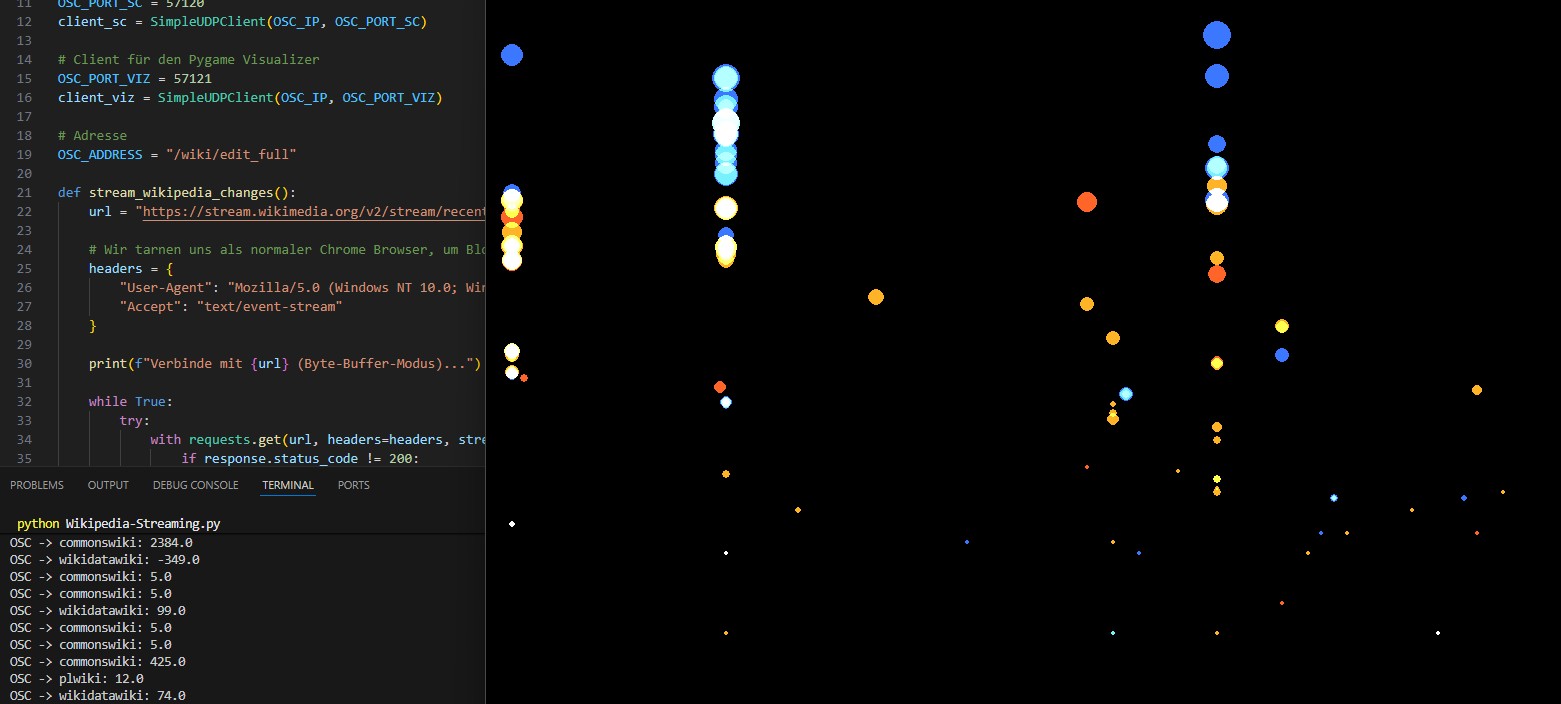

2. Research Prototype (Python / SuperCollider)

- Distributed Architecture: A modular Publish–Subscribe system using OSC (Open Sound Control) via UDP for low-latency communication between distinct processes.

- Algorithmic Mapping: Translation logic that maps text metadata (e.g., article title length) to microtonal frequencies and visual coordinates.

- High-End Audio Engine: A sample-free, generative synthesis engine built in SuperCollider, with reverb tails that vary with edit magnitude.
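To make the mapping and messaging ideas above more concrete, here is a minimal sketch using only the standard library. Everything specific in it is an assumption for illustration, not the project's actual mapping: the quarter-tone scale (24 steps per octave), the 110 Hz base pitch, the logarithmic reverb curve, and the `/edit` OSC address are all hypothetical. The OSC packet is encoded by hand only to keep the example dependency-free; in the prototype's stack, python-osc would normally do this.

```python
import math
import struct


def title_to_frequency(title: str, base_hz: float = 110.0,
                       steps_per_octave: int = 24) -> float:
    """Map title length to a microtonal pitch (assumed quarter-tone scale)."""
    # Wrap the length into a two-octave range so long titles stay audible.
    step = len(title) % (2 * steps_per_octave)
    return base_hz * 2 ** (step / steps_per_octave)


def delta_to_reverb_seconds(byte_delta: int, max_seconds: float = 8.0) -> float:
    """Map edit magnitude to a reverb tail length (assumed log curve)."""
    # Additions and removals are treated alike; the log keeps huge edits bounded.
    return min(max_seconds, 0.5 + math.log10(1 + abs(byte_delta)))


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_osc(address: str, *floats: float) -> bytes:
    """Build an OSC message whose arguments are all float32 values."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", value) for value in floats)
    return _osc_string(address) + _osc_string(type_tags) + payload


# Hypothetical message: pitch and reverb length for one edit.
packet = encode_osc(
    "/edit",
    title_to_frequency("Ada Lovelace"),
    delta_to_reverb_seconds(64),
)
```

The resulting `packet` could then be sent as a single UDP datagram, for example with `socket.sendto(packet, ("127.0.0.1", 57120))`, where 57120 is sclang's default listening port. A log curve is used here because edit sizes span several orders of magnitude; a linear mapping would let a few very large edits dominate the mix.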

Tech Stack

Web Implementation

- Core: JavaScript (ES6+), Server-Sent Events (SSE)

- Visuals: Three.js (WebGL)

- Audio: Web Audio API

Local Research Version

- Core Logic: Python 3.10+ (requests, python-osc)

- Visuals: Pygame

- Audio Engine: SuperCollider (sclang)

- Data Source: Wikimedia EventStreams API (Server-Sent Events)
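As a rough illustration of how the local version might consume the data source, the sketch below parses Server-Sent Events from an iterable of text lines and reduces each edit to the fields the mappings care about. The function names are hypothetical. The field names (`title`, `bot`, `length.old`, `length.new`) follow the public `recentchange` stream schema; how the project itself reads them is an assumption.

```python
import json
from typing import Iterable, Iterator


def iter_sse_events(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one decoded JSON object per SSE event found in `lines`."""
    data_parts: list[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line terminates the current event.
            if data_parts:
                yield json.loads("\n".join(data_parts))
                data_parts = []
        elif line.startswith(":"):
            continue  # SSE comment, used by servers as a keep-alive
        elif line.startswith("data:"):
            value = line[len("data:"):]
            # The SSE format allows one optional space after the colon.
            data_parts.append(value[1:] if value.startswith(" ") else value)
        # Other fields (event:, id:, retry:) are ignored in this sketch.


def summarize(event: dict) -> dict:
    """Reduce a recentchange event to the fields used for mapping."""
    length = event.get("length") or {}
    delta = (length.get("new") or 0) - (length.get("old") or 0)
    return {
        "title": event.get("title", ""),
        "is_bot": bool(event.get("bot", False)),  # human vs. bot voice
        "byte_delta": delta,
    }


# Offline sample in the wire format, so the sketch runs without a network.
sample = [
    ":ok",
    "",
    "event: message",
    "id: 1",
    'data: {"title": "Ada Lovelace", "bot": false, '
    '"length": {"old": 100, "new": 164}}',
    "",
]
summaries = [summarize(event) for event in iter_sse_events(sample)]
```

With the `requests` library from the stack above, the lines could plausibly come from `requests.get(url, stream=True).iter_lines(decode_unicode=True)`, with `url` set to `https://stream.wikimedia.org/v2/stream/recentchange`. Reconnection and error handling are left out here.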